This is a collection of old blog posts, going back to 2006. For some strange reason I thought it would be a good idea to have two blogs. They have been migrated here from blogs.olsen.ch.

a philosophy of science primer - part III

See

- part I: some history of science and logical empiricism,

- part II: problems of logical empiricism, critical rationalism and its problems.

After the unsuccessful attempts to found science on common sense notions as seen in the programs of logical empiricism and critical rationalism, people looked for new ideas and explanations.

The Kuhnian View

Thomas Kuhn’s enormously influential work on the history of science is called the Structure of Scientific Revolutions. He revised the idea that science is an incremental process accumulating more and more knowledge. Instead, he identified the following phases in the evolution of science:

- prehistory: many schools of thought coexist and controversies are abundant,

- history proper: one group of scientists establishes a new solution to an existing problem which opens the doors to further inquiry; a so called paradigm emerges,

- paradigm based science: unity in the scientific community on what the fundamental questions and central methods are; generally a problem solving process within the boundaries of unchallenged rules (analogy to solving a Sudoku),

- crisis: more and more anomalies and boundaries appear; questioning of established rules,

- revolution: a new theory and weltbild take over, resolving the anomalies, and a new paradigm is born.

Another central concept is incommensurability, meaning that proponents of different paradigms cannot understand the other’s point of view because they have diverging ideas and views of the world. In other words, every rule is part of a paradigm and there exist no trans-paradigmatic rules.

This implies that such revolutions are not rational processes governed by insights and reason. In the words of Max Planck (the founder of quantum mechanics; from his autobiography):

A new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die, and a new generation grows up that is familiar with it.

Kuhn gives additional blows to a commonsensical foundation of science with the help of Norwood Hanson and Willard Van Orman Quine:

- every human observation of reality contains an a priori theoretical framework,

- underdetermination of belief by evidence: any evidence collected for a specific claim is logically consistent with the falsity of the claim,

- every experiment is based on auxiliary hypotheses (initial conditions, proper functioning of apparatus, experimental setup,…).

People slowly started to realize that there are serious consequences in Kuhn’s ideas and the problems faced by the logical empiricists and critical rationalists in establishing a sound logical and empirical foundation of science:

- postmodernism,

- constructivism or the sociology of science,

- relativism.

Postmodernism

Modernism describes the development of Western industrialized society since the beginning of the 19th Century. A central idea was that there exist objective true beliefs and that progression is always linear.

Postmodernism replaces these notions with the belief that many different opinions and forms can coexist and all find acceptance. Core ideas are diversity, differences and intermingling. In the 1970s it is seen to enter scientific and cultural thinking.

Postmodernism has taken a bad rap from scientists after the so-called Sokal affair, in which the physicist Alan Sokal got a nonsensical paper published in a journal of postmodern cultural studies by flattering the editors’ ideology with nonsense that sounded good.

Postmodernism has been associated with scepticism and solipsism, as well as with relativism and constructivism.

Notable scientists identifiable as postmodernists are Thomas Kuhn, David Bohm and many figures in the 20th century philosophy of mathematics, as well as Paul Feyerabend, an influential philosopher of science.

Constructivism

To quote the Nobel laureate Steven Weinberg on Kuhnian revolutions:

If the transition from one paradigm to another cannot be judged by any external standard, then perhaps it is culture rather than nature that dictates the content of scientific theories.

Constructivism excludes objectivism and rationality by postulating that beliefs are always subject to a person’s cultural and theological embedding and inherent idiosyncrasies. It also goes under the label of the sociology of science.

In the words of Paul Boghossian (in his book Fear of Knowledge: Against Relativism and Constructivism):

Constructivism about rational explanation: it is never possible to explain why we believe what we believe solely on the basis of our exposure to the relevant evidence; our contingent needs and interests must also be invoked.

The proponents of constructivism go further:

[…] all beliefs are on a par with one another with respect to the causes of their credibility. It is not that all beliefs are equally true or equally false, but that regardless of truth and falsity the fact of their credibility is to be seen as equally problematic.

From Barry Barnes’ and David Bloor’s Relativism, Rationalism and the Sociology of Knowledge.

In its radical version, constructivism fully abandons objectivism:

- Objectivity is the illusion that observations are made without an observer (from the physicist Heinz von Foerster; my translation)

- Modern physics has conquered domains that display an ontology that cannot be coherently captured or understood by human reasoning (from the philosopher Ernst von Glasersfeld; my translation)

In addition, radical constructivism proposes that perception never yields an image of reality but is always a construction of sensory input and the memory capacity of an individual. An analogy would be the submarine captain who has to rely on instruments to indirectly gain knowledge from the outside world. Radical constructivists are motivated by modern insights gained by neurobiology.

Historically, Immanuel Kant can be understood as the founder of constructivism. On a side note, the bishop George Berkeley went even as far as to deny the existence of an external material reality altogether. Only ideas and thought are real.

Relativism

Another consequence of the foundations of science lacking commonsensical elements, and of the ideas of constructivism, can be seen in the notion of relativism. If rationality is a function of our contingent and pragmatic reasons, then it can be rational for a group A to believe P, while at the same time it is rational for a group B to believe the negation of P.

Although, as a philosophical idea, relativism goes back to the Greek Protagoras, its implications are unsettling for the Western mind: anything goes (as Paul Feyerabend characterizes his idea of scientific anarchy). If there is no objective truth, no absolute values, nothing universal, then a great many of humanity’s centuries-old concepts and beliefs are in danger.

It should, however, also be mentioned that relativism is prevalent in Eastern thought systems and is, for example, found in many Indian religions. In a similar vein, pantheism and holism are notions which are much more compatible with Eastern thought systems than Western ones.

Furthermore, John Stuart Mill’s arguments for liberalism appear to also work well as arguments for relativism:

- fallibility of people’s opinions,

- opinions that are thought to be wrong can contain partial truths,

- accepted views, if not challenged, can lead to dogmas,

- the significance and meaning of accepted opinions can be lost in time.

From his book On Liberty.

Epilogue

But could relativism be possibly true? Consider the following hints:

- Epistemological

- problems with perception: synaesthesia, altered states of consciousness (spontaneous, mystical experiences and drug induced),

- psychopathology describes a frightening number of defects in the perception of reality and one’s self,

- people suffering from psychosis or schizophrenia can experience a radically different reality,

- free will and neuroscience,

- synthetic happiness,

- cognitive biases.

- Ontological

- nonlocal foundation of quantum reality: entanglement, delayed choice experiment,

- illogical foundation of reality: wave-particle duality, superpositions, uncertainty, intrinsic probabilistic nature, time dilation (special relativity), observer/measurement problem in quantum theory,

- discreteness of reality: quanta of energy and matter, constant speed of light,

- nature of time: not present in fundamental theories of quantum gravity, symmetrical,

- arrow of time: why was the initial state of the universe very low in entropy?

- emergence, self-organization and structure formation.

In essence, perception doesn’t necessarily say much about the world around us. Consciousness can fabricate reality. This makes it hard to be rational. Reality is a really bizarre place. Objectivity doesn’t seem to play a big role.

And what about the human mind? Is this at least a paradox-free realm? Unfortunately not. Even what appears to be a consistent and logical formal thought system, i.e., mathematics, can be plagued by fundamental problems. Kurt Gödel proved that in every consistent system of mathematical axioms powerful enough to express the elementary arithmetic of whole numbers, there exist statements which can be neither proven nor disproved within the system. So such axiomatic systems are incomplete.

As an example Bertrand Russell encountered the following paradox: let R be the set of all sets that do not contain themselves as members. Is R an element of itself or not?
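
The contradiction behind the question can be written out in one line. This is only a sketch, assuming the naive idea that any property whatsoever defines a set; the notation is mine and not part of the original argument:

```latex
R = \{\, x \mid x \notin x \,\}
\quad\Longrightarrow\quad
\big( R \in R \iff R \notin R \big)
```

Either answer to the question forces the opposite one, so no such set R can exist under that naive assumption.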

If you really accede to the idea that reality and the perception of reality by the human mind are very problematic concepts, then the next puzzles are:

- why has science been so fantastically successful at describing reality?

- why is science producing amazing technology at breakneck speed?

- why is our macroscopic, classical level of reality so well behaved and appears so normal although it is based on quantum weirdness?

- are all beliefs justified given the believer’s biography and brain chemistry?

a philosophy of science primer - part II

Continued from part I…

The Problems With Logical Empiricism

The programme proposed by the logical empiricists, namely that science is built of logical statements resting on an empirical foundation, faces central difficulties. To summarize:

- it turns out that it is not possible to construct pure formal concepts that solely reflect empirical facts without anticipating a theoretical framework,

- how does one link theoretical concepts (electrons, utility functions in economics, inflationary cosmology, Higgs bosons,…) to experiential notions?

- how to distinguish science from pseudo-science?

Now this may appear a little technical and not very interesting or fundamental to people outside the field of the philosophy of science, but it gets worse:

- inductive reasoning is invalid from a formal logical point of view!

- causality defies standard logic!

This is big news. So, just because I have witnessed the sun going up every day of my life (single observations), I cannot say it will go up tomorrow (general law). Observation alone does not suffice; you need a theory. But the whole idea here is that the theory should come from observation. This leads to the dead end of circular reasoning.

But surely causality is indisputable? Well, apart from the problems coming from logic itself, there are extreme examples to be found in modern physics which undermine the common sense notion of a causal reality: quantum nonlocality, delayed choice experiment.

But challenges often inspire people, so the story continues…

Critical Rationalism

OK, so the logical empiricists faced problems. Can’t these be fixed? The critical rationalists believed so. A crucial influence came from René Descartes’ and Gottfried Leibniz’ rationalism: knowledge can have aspects that do not stem from experience, i.e., there is an immanent reality to the mind.

The term critical refers to the fact that insights gained by pure thought cannot be strictly justified but only critically tested with experience. Ultimate justifications lead to the so-called Münchhausen trilemma, i.e., one of the following:

- an infinite regress of justifications,

- circular reasoning,

- dogmatic termination of reasoning.

The most influential proponent of critical rationalism was Karl Popper. His central claims were in essence

- use deductive reasoning instead of induction,

- theories can never be verified, only falsified.

Although there are similarities with logical empiricism (empirical basis, science is a set of theoretical constructs), the idea is that theories are simply invented by the mind and are temporarily accepted until they can be falsified. The progression of science is hence seen as an evolutionary process rather than a linear accumulation of knowledge.

Sounds good, so what went wrong with this ansatz?

The Problems With Critical Rationalism

In a nutshell:

- basic formal concepts cannot be derived from experience without induction; how can they be shown to be true?

- deduction turns out to be just as tricky as induction,

- what parts of a theory need to be discarded once it is falsified?

To see where deduction breaks down, see a nice story by Lewis Carroll (the mathematician who wrote the Alice in Wonderland stories): What the Tortoise Said to Achilles.

If deduction goes down the drain as well, not much is left to ground science on notions of logic, rationality and objectivity. Which is rather unexpected of an enterprise that in itself works amazingly well employing just these concepts.

Explanations in Science

And it gets worse. Inquiries into the nature of scientific explanation reveal further problems. The starting point is Carl Hempel’s and Paul Oppenheim’s formalisation of scientific inquiry in natural language. Two basic schemes are identified: deductive-nomological and inductive-statistical explanations. The idea is to show that what is being explained (the explanandum) is to be expected on the grounds of these two types of explanations.

The first tries to explain things deductively in terms of regularities and exact laws (nomological). The second uses statistical hypotheses and explains individual observations inductively. Albeit very formal, this inquiry into scientific inquiry is very straightforward and commonsensical.

Again, the programme fails:

- can’t explain singular causal events,

- asymmetric (a change in the air pressure explains the readings on a barometer, however, the barometer doesn’t explain why the air pressure changed),

- many explanations are irrelevant,

- as seen before, inductive and deductive logic are both controversial,

- how to employ probability theory in the explanation?

So what next? What are the consequences of these unexpected and spectacular failings of the simplest premises one would wish science to be grounded on (logic, empiricism, causality, common sense, rationality, …)?

The discussion is ongoing and isn’t expected to be resolved soon. See part III…

a philosophy of science primer - part I

Naively one would expect science to adhere to two basic notions:

- common sense, i.e., rationalism,

- observation and experiments, i.e., empiricism.

Interestingly, both concepts turn out to be very problematic if applied to the question of what knowledge is and how it is acquired. In essence, they cannot be seen as a foundation for science.

But first a little history of science…

Classical Antiquity

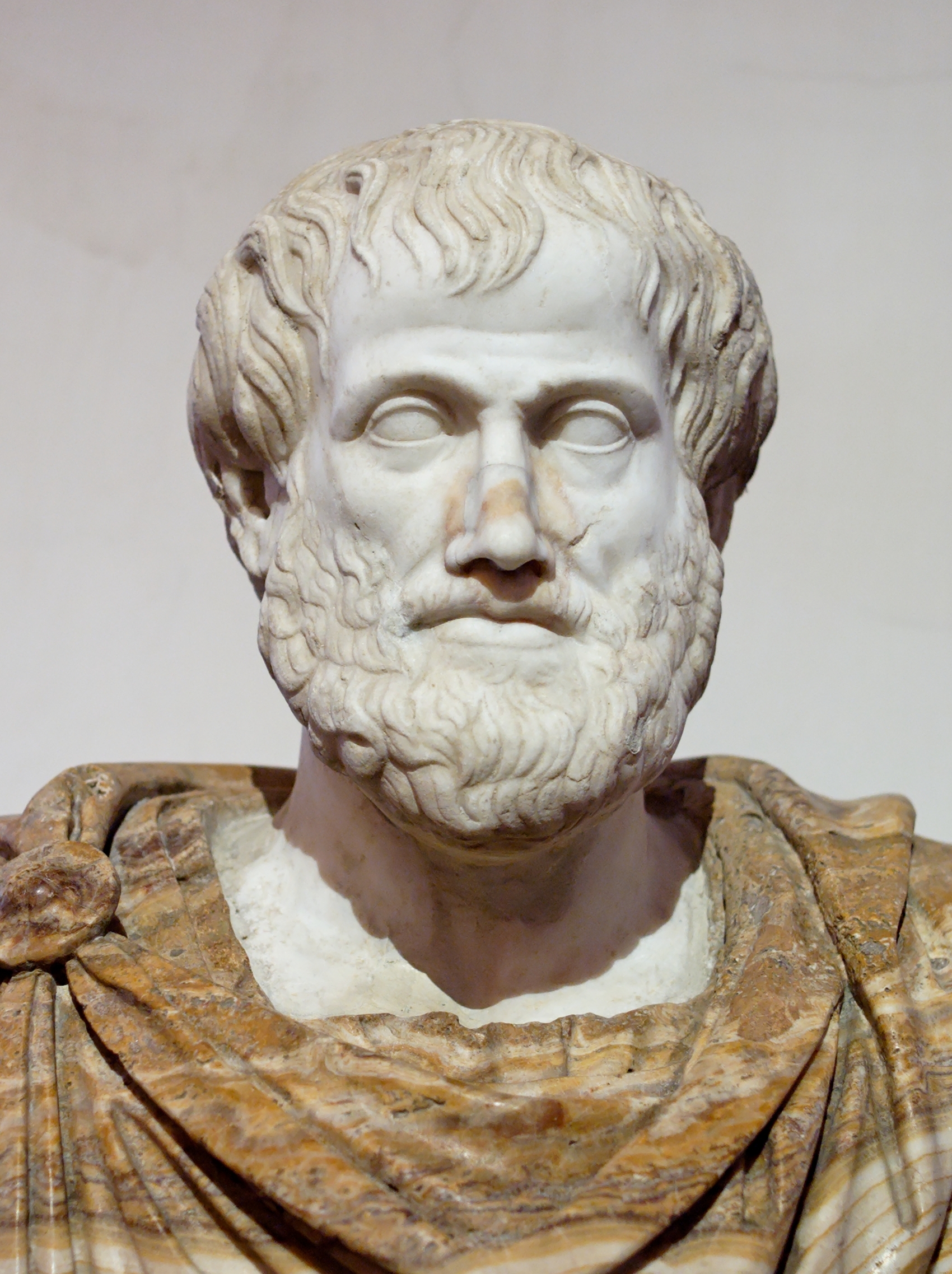

The Greek philosopher Aristotle was one of the first thinkers to introduce logic as a means of reasoning. His empirical method was driven by gaining general insights from isolated observations. He had a huge influence on the thinking within the Islamic and Jewish traditions next to shaping Western philosophy and inspiring thinking in the physical sciences.

Modern Era

Nearly two thousand years later, not much had changed. Francis Bacon (the philosopher, not the painter) made modifications to Aristotle’s ideas, introducing the so-called scientific method where inductive reasoning plays an important role. He paved the way for a modern understanding of scientific inquiry.

Approximately at the same time, Robert Boyle was instrumental in establishing experiments as the cornerstone of physical sciences.

Logical Empiricism

So far so good. By the early 20th Century, the notion that science is based on experience (empiricism) and logic, and that knowledge is intersubjectively testable, had already had a long history.

The philosophical school of logical empiricism (or logical positivism) tries to formalise these ideas. Notable proponents were Ernst Mach, Ludwig Wittgenstein, Bertrand Russell, Rudolf Carnap, Hans Reichenbach, Otto Neurath. Some main influences were:

- David Hume’s and John Locke’s empiricism: all knowledge originates from observation, nothing can exist in the mind which wasn’t before in the senses,

- Auguste Comte’s and John Stuart Mill’s positivism: there exists no knowledge outside of science.

In this paradigm (see Thomas Kuhn a little later) science is viewed as a building comprised of logical terms based on an empirical foundation. A theory is understood as having the following structure: observation -> empirical concepts -> formal notions -> abstract law. Basically a sequence of ever higher abstraction.

This notion of unveiling laws of nature by starting with individual observations is called induction (the other way round, starting with abstract laws and ending with a tangible factual description is called deduction, see further along).

And here the problems start to emerge. See part II…

Stochastic Processes and the History of Science: From Planck to Einstein

How are the notions of randomness, i.e., stochastic processes, linked to theories in physics and what have they got to do with options pricing in economics?

How did the prevailing world view change from 1900 to 1905?

What connects the mathematicians Bachelier, Markov, Kolmogorov, Ito to the physicists Langevin, Fokker, Planck, Einstein and the economists Black, Scholes, Merton?

The Setting

- Science up to 1900 was in essence the study of solutions of differential equations (Newton’s heritage);

- Was very successful, e.g., Maxwell’s equations: four differential equations describing everything about (classical) electromagnetism;

- Prevailing world view:

- Deterministic universe;

- Initial conditions plus the solution of the differential equations yield a certain prediction of the future.

Three Pillars

By the end of the 20th Century, it became clear that there are (at least?) two additional aspects needed for a more complete understanding of reality:

- Inherent randomness: statistical evaluations of sets of outcomes of single observations/experiments;

- Quantum mechanics (Planck 1900; Einstein 1905) contains a fundamental element of randomness;

- In chaos theory (e.g., Lorenz 1963) non-linear dynamics leads to a sensitivity to initial conditions which renders even simple differential equations essentially unpredictable;

- Complex systems (e.g., Wolfram 1983), i.e., self-organization and emergent behavior, best understood as outcomes of simple rules.
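
Sensitivity to initial conditions is easy to see numerically. The sketch below swaps in the logistic map, a discrete-time toy system rather than a differential equation, because it needs only a few lines; the map, the parameter r = 4 and the starting values are my choices for illustration and do not come from the sources cited above.

```python
def logistic_orbit(x0, r=4.0, steps=100):
    """Iterate x -> r * x * (1 - x) and return the whole orbit."""
    orbit = [x0]
    for _ in range(steps):
        x = orbit[-1]
        orbit.append(r * x * (1.0 - x))
    return orbit


# Two starting points that differ by one part in ten billion.
orbit_a = logistic_orbit(0.2)
orbit_b = logistic_orbit(0.2 + 1e-10)
gaps = [abs(a - b) for a, b in zip(orbit_a, orbit_b)]

print(f"initial gap: {gaps[0]:.1e}")
print(f"largest gap over 100 steps: {max(gaps):.3f}")
```

The rule is fully deterministic, yet the tiny initial difference grows until the two orbits have nothing to do with each other.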

Stochastic Processes

- Systems which evolve probabilistically in time;

- Described by a time-dependent random variable;

- The probability density function describes the distribution of the measurements at time t;

- Prototype: The Markov process.

For a Markov process, only the present state of the system influences its future evolution: there is no long-term memory. Examples:

- Wiener process or Einstein-Wiener process or Brownian motion:

- Introduced by Bachelier in 1900;

- Continuous (in t and the sample path);

- Increments are independent and drawn from a Gaussian normal distribution;

- Random walk:

- Discrete steps (jumps), continuous in t;

- Is a Wiener process in the limit of the step size going to zero.
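
The limit in the last point can be checked with a small simulation. This is a minimal sketch under one assumption that is not spelled out above: the jump size is scaled as the square root of the time step, so that the variance of the walk at time t stays equal to t, as it is for a standard Wiener process. Function names and parameter values are made up for the example.

```python
import math
import random


def random_walk_endpoint(total_time, n_steps, rng):
    """Sum n_steps jumps of size +/- sqrt(dt), with dt = total_time / n_steps."""
    step = math.sqrt(total_time / n_steps)
    return sum(step if rng.random() < 0.5 else -step for _ in range(n_steps))


def endpoint_stats(total_time=1.0, n_steps=100, n_paths=2000, seed=0):
    """Sample mean and variance of the walk's position at total_time."""
    rng = random.Random(seed)
    ends = [random_walk_endpoint(total_time, n_steps, rng) for _ in range(n_paths)]
    mean = sum(ends) / n_paths
    var = sum((x - mean) ** 2 for x in ends) / (n_paths - 1)
    return mean, var


mean, var = endpoint_stats()
print(f"mean = {mean:.3f}, variance = {var:.3f} (Wiener process at t = 1: 0 and 1)")
```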

To summarize, there are three possible characteristics:

- Jumps (in sample path);

- Drift (of the probability density function);

- Diffusion (widening of the probability density function).

[Figure: probability density function showing drift and diffusion.]
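
Drift and diffusion can also be read off a formula. As a sketch, assume the simplest case of a process with constant drift and constant diffusion started at x = 0: its density at time t is a Gaussian whose peak moves linearly in t and whose width grows like the square root of t. The values drift = 0.5 and sigma = 1.0 are arbitrary illustration values.

```python
import math


def density(x, t, drift=0.5, sigma=1.0):
    """Gaussian density at time t > 0 for a process started at x = 0."""
    mean = drift * t
    var = sigma ** 2 * t
    return math.exp(-(x - mean) ** 2 / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)


def peak_and_width(t, drift=0.5, sigma=1.0):
    """Location of the density peak (drift) and its standard deviation (diffusion)."""
    return drift * t, sigma * math.sqrt(t)


for t in (1.0, 4.0):
    peak, width = peak_and_width(t)
    print(f"t = {t}: peak at {peak}, width {width}, height {density(peak, t):.4f}")
```

Between t = 1 and t = 4 the peak moves to the right while the curve becomes wider and flatter.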

But how to deal with stochastic processes?

The Micro View

Einstein:

- Presented a theory of Brownian motion in 1905;

- New paradigm: stochastic modeling of natural phenomena; statistics as intrinsic part of the time evolution of system;

- Mean-square displacement of Brownian particle proportional to time;

- Equation for the Brownian particle similar to a diffusion (differential) equation.
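
The last two points fit together in a short calculation. The following is the standard textbook form in one dimension for a particle starting at the origin; the symbols p (probability density) and D (diffusion constant) are my notation:

```latex
\frac{\partial p(x,t)}{\partial t} = D\,\frac{\partial^2 p(x,t)}{\partial x^2},
\qquad
p(x,t) = \frac{1}{\sqrt{4\pi D t}}\,\exp\!\left(-\frac{x^2}{4 D t}\right),
\qquad
\langle x^2(t) \rangle = \int_{-\infty}^{\infty} x^2\, p(x,t)\,dx = 2 D t
```

The Gaussian solves the diffusion equation, and its variance grows linearly in time, which is the statement about the mean-square displacement.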

Langevin:

- Presented a new derivation of Einstein’s results in 1908;

- First stochastic differential equation, i.e., a differential equation containing a “rapidly and irregularly fluctuating random force” (today called a random variable);

- Solutions of differential equation are random functions.
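
A Langevin-type equation can be integrated numerically by adding a small Gaussian kick at every time step. The sketch below assumes the simplest form, dv = -gamma v dt + sigma dW for the velocity of a particle of unit mass, and uses the Euler-Maruyama scheme, which is a modern numerical recipe and not Langevin's own derivation; gamma, sigma and the step size are illustration values.

```python
import math
import random


def simulate_velocity(gamma=1.0, sigma=1.0, dt=0.01, n_steps=300, rng=None):
    """Euler-Maruyama integration of dv = -gamma * v * dt + sigma * dW, v(0) = 0."""
    if rng is None:
        rng = random.Random()
    v = 0.0
    for _ in range(n_steps):
        dW = rng.gauss(0.0, math.sqrt(dt))  # Gaussian increment of the random force
        v += -gamma * v * dt + sigma * dW
    return v


rng = random.Random(1)
finals = [simulate_velocity(rng=rng) for _ in range(1000)]
mean_v = sum(finals) / len(finals)
var_v = sum((v - mean_v) ** 2 for v in finals) / (len(finals) - 1)
print(f"variance of v at t = 3: {var_v:.3f} (long-time theory: sigma^2 / (2 gamma) = 0.5)")
```

Every run produces a different random function v(t); only statistics such as the variance are reproducible.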

However, no formal mathematical grounding until 1942, when Ito developed stochastic calculus:

- Langevin’s equations interpreted as Ito stochastic differential equations using Ito integrals;

- Ito integral defined to deal with non-differentiable sample paths of random functions;

- Ito lemma (a generalized chain rule) used to solve stochastic differential equations.
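
For reference, the lemma in its usual one-dimensional form, together with the standard example of applying it to the logarithm of a geometric Brownian motion. The symbols mu (drift), sigma (diffusion coefficient), W (Wiener process) and S are my notation:

```latex
dX_t = \mu\,dt + \sigma\,dW_t
\;\Longrightarrow\;
df(X_t,t) = \left(\frac{\partial f}{\partial t} + \mu\,\frac{\partial f}{\partial x}
+ \tfrac{1}{2}\,\sigma^2\,\frac{\partial^2 f}{\partial x^2}\right)dt
+ \sigma\,\frac{\partial f}{\partial x}\,dW_t

dS_t = \mu S_t\,dt + \sigma S_t\,dW_t
\;\Longrightarrow\;
d(\ln S_t) = \left(\mu - \tfrac{1}{2}\sigma^2\right)dt + \sigma\,dW_t
```

The extra second-derivative term is what distinguishes this from the ordinary chain rule; it comes from the non-differentiable sample paths.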

Note:

- The Markov process is a solution to a simple stochastic differential equation;

- The celebrated Black-Scholes option pricing formula is derived from a stochastic differential equation that models the price of the underlying asset with Brownian motion.
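
For European options the Black-Scholes result has a closed form that fits in a few lines. This is a sketch under the usual textbook assumptions (no dividends, constant interest rate and volatility); the function and argument names are mine.

```python
import math


def norm_cdf(x):
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes(spot, strike, rate, vol, maturity):
    """Return (call, put) prices of European options on a non-dividend-paying asset."""
    sqrt_t = math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * maturity) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * maturity)
    call = spot * norm_cdf(d1) - strike * discount * norm_cdf(d2)
    put = strike * discount * norm_cdf(-d2) - spot * norm_cdf(-d1)
    return call, put


call, put = black_scholes(spot=100.0, strike=100.0, rate=0.05, vol=0.2, maturity=1.0)
print(f"call = {call:.4f}, put = {put:.4f}")
```

The difference between the call and the put price equals the spot minus the discounted strike (put-call parity), which is a quick sanity check on any implementation.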

The Fokker-Planck Equation: Moving To The Macro View

- The Langevin equation describes the evolution of the position of a single “stochastic particle”;

- The Fokker-Planck equation describes the behavior of a large population of “stochastic particles”;

- Formally: The Fokker-Planck equation gives the time evolution of the probability density function of the system;

- Results can be derived more directly using the Fokker-Planck equation than using the corresponding stochastic differential equation;

- The theory of Markov processes can be developed from this macro point of view.
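
The correspondence between the two views can be stated compactly. In one dimension, and assuming the Ito interpretation of the stochastic differential equation, the micro and macro descriptions are related as follows; mu, sigma and p are my notation:

```latex
dX_t = \mu(X_t,t)\,dt + \sigma(X_t,t)\,dW_t
\quad\longleftrightarrow\quad
\frac{\partial p(x,t)}{\partial t}
= -\frac{\partial}{\partial x}\big[\mu(x,t)\,p(x,t)\big]
+ \frac{1}{2}\,\frac{\partial^2}{\partial x^2}\big[\sigma^2(x,t)\,p(x,t)\big]
```

The first term on the right moves the density (drift), the second one widens it (diffusion). With zero drift and constant sigma the equation reduces to the plain diffusion equation.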

The Historical Context

Bachelier

- Developed a theory of Brownian motion (Einstein-Wiener process) in 1900 (five years before Einstein, and long before Wiener);

- Was the first person to use a stochastic process to model financial systems;

- Essentially his contribution was forgotten until the late 1950s;

- Black, Scholes and Merton’s publication in 1973 finally gave Brownian motion the break-through in finance.

Planck

- Founder of quantum theory;

- 1900 theory of black-body radiation;

- Central assumption: electromagnetic energy is quantized, E = h ν;

- In 1914 Fokker derives an equation on Brownian motion which Planck proves;

- Applies the Fokker-Planck equation as quantum mechanical equation, which turns out to be wrong;

- In 1931 Kolmogorov presented two fundamental equations on Markov processes;

- It was later realized that one of them was actually equivalent to the Fokker-Planck equation.

Einstein

1905 “Annus Mirabilis” publications. Fundamental paradigm shifts in the understanding of reality:

- Photoelectric effect:

- Explained by giving Planck’s (theoretical) notion of energy quanta a physical reality (photons),

- Further establishing quantum theory,

- Winning him the Nobel Prize;

- Brownian motion:

- First stochastic modeling of natural phenomena,

- The experimental verification of the theory established the existence of atoms, which had been heavily debated at the time,

- Einstein’s most frequently cited paper, in the fields of biology, chemistry, earth and environmental sciences, life sciences, engineering;

- Special theory of relativity: the relative speeds of the observers’ reference frames determine the passage of time;

- Equivalence of energy and mass (follows from special relativity): E = m c^2.
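
The formulas behind three of these results are short enough to try out. A minimal sketch: photon energy E = h ν, rest energy E = m c^2 and the time dilation factor of special relativity, 1 / sqrt(1 - v^2 / c^2), which is the standard expression and is not written out above. The function names and the example inputs are mine; the constants are the exact SI values.

```python
import math

PLANCK_H = 6.62607015e-34      # Planck constant in J s (exact SI value)
LIGHT_SPEED = 299_792_458.0    # speed of light in m/s (exact SI value)


def photon_energy(frequency_hz):
    """Energy in joules of one light quantum of the given frequency."""
    return PLANCK_H * frequency_hz


def rest_energy(mass_kg):
    """Energy in joules equivalent to a mass at rest."""
    return mass_kg * LIGHT_SPEED ** 2


def lorentz_factor(speed):
    """Factor by which a clock moving at `speed` (m/s) runs slow for a resting observer."""
    return 1.0 / math.sqrt(1.0 - (speed / LIGHT_SPEED) ** 2)


print(f"photon at 5e14 Hz: {photon_energy(5.0e14):.3e} J")
print(f"rest energy of 1 kg: {rest_energy(1.0):.3e} J")
print(f"time dilation at 60% of light speed: {lorentz_factor(0.6 * LIGHT_SPEED):.2f}")
```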

Einstein was working at the Patent Office in Bern at the time and submitted his Ph.D. to the University of Zurich in July 1905.

Later Work:

- 1915: general theory of relativity, explaining gravity in terms of the geometry (curvature) of space-time;

- Planck also made contributions to general relativity;

- Although having helped in founding quantum mechanics, Einstein fundamentally opposed its probabilistic implications: “God does not throw dice”;

- Dreams of a unified field theory:

- Spent his last 30 years or so trying (unsuccessfully) to extend the general theory of relativity to unite it with electromagnetism;

- Kaluza and Klein elegantly managed to do this in 1921 by developing general relativity in five space-time dimensions;

- Today there is still no empirically validated theory able to explain gravity and the (quantum) Standard Model of particle physics, despite intense theoretical research (string/M-theory, loop quantum gravity);

- In fact, one of the main goals of the LHC at CERN (officially operational on the 21st of October 2008) is to find hints of such a unified theory (supersymmetric particles, higher dimensions of space).

laws of nature

What are Laws of Nature?

- Allow for predictions

- Dependent on only a small set of conditions (i.e., independent of very many conditions which could possibly have an effect)

…but why are there laws of nature and how can these laws be discovered and understood by the human mind?

No One Knows!

- G.W. von Leibniz in 1714 (Principes de la nature et de la grâce):

- Why is there something rather than nothing? For nothingness is simpler and easier than anything

- E. Wigner, “The Unreasonable Effectiveness of Mathematics in the Natural Sciences“, 1960:

- […] the enormous usefulness of mathematics in the natural sciences is something bordering on the mysterious and […] there is no rational explanation for it

- […] it is not at all natural that “laws of nature” exist, much less that man is able to discover them

- […] the two miracles of the existence of laws of nature and of the human mind’s capacity to divine them

- […] fundamentally, we do not know why our theories work so well

In a Nutshell

- We happen to live in a structured, self-organizing, and fine-tuned universe that allows the emergence of sentient beings (anthropic principle)

- The human mind is capable of devising formal thought systems (mathematics)

- Mathematical models are able to capture and represent the workings of the universe

See also this post: in a nutshell.

The Fundamental Level of Reality: Physics

- Classical mechanics: invariance of the equations under transformations (e.g., time => conservation of energy)

- Gravitation (general relativity): geometry and the independence of the coordinate system (covariance)

- The other three forces of nature (unified in quantum field theory): mathematics of symmetry and special kind of invariance

See also these posts: fundamental, invariant thinking.

Towards Complexity

- Physics was extremely successful in describing the inanimate world in the last 300 years or so

- But what about complex systems comprised of many interacting entities, e.g., the life and social sciences?

- The rest is chemistry; C. D. Anderson in 1932; echoing the success of a reductionist approach to understanding the workings of nature after having discovered the positron

- At each stage [of complexity] entirely new laws, concepts, and generalizations are necessary […]. Psychology is not applied biology, nor is biology applied chemistry; P. W. Anderson in 1972; pointing out that the knowledge about the constituents of a system doesn’t reveal any insights into how the system will behave as a whole; so it is not at all clear how you get from quarks and leptons via DNA to a human brain…

Complex Systems: Simplicity

The Limits of Physics

- Closed-form solutions to analytical expressions are mostly only attainable if non-linear effects (e.g., friction) are ignored

- Not too many interacting entities can be considered (e.g., three body problem)

The Complexity of Simple Rules

- S. Wolfram’s cellular automaton rule 110: neither completely random nor completely repetitive

- [The] results [simple rules give rise to complex behavior] were so surprising and dramatic that as I gradually came to understand them, they forced me to change my whole view of science […]; S. Wolfram reminiscing on his early work on cellular automata in the 80s (“A New Kind of Science”, pg. 19)
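
Rule 110 itself takes only a few lines of code. In this sketch each cell looks at itself and its two neighbours, and the eight possible neighbourhoods are looked up in the bits of the number 110. Treating cells beyond the edges as 0 and starting from a single live cell are my choices for the example.

```python
def step(cells, rule=110):
    """Apply one update of an elementary cellular automaton (cells outside the row count as 0)."""
    padded = [0] + list(cells) + [0]
    return [
        # The neighbourhood (left, centre, right) read as a 3-bit number selects a bit of `rule`.
        (rule >> (padded[i - 1] << 2 | padded[i] << 1 | padded[i + 1])) & 1
        for i in range(1, len(padded) - 1)
    ]


def run(width=32, generations=16):
    """Evolve a row with a single live cell at the right edge and return all rows."""
    row = [0] * (width - 1) + [1]
    history = [row]
    for _ in range(generations):
        row = step(row)
        history.append(row)
    return history


for row in run():
    print("".join("#" if cell else "." for cell in row))
```

Increasing `width` and `generations` shows the mixture of regular background and irregular structures that the quote refers to.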

Complex Systems: The Paradigm Shift

- The interaction of entities (agents) in a system according to simple rules gives rise to complex behavior

- The shift from mathematical (analytical) models to algorithmic computations and simulations performed in computers (only this bottom-up approach to simulating complex systems has been fruitful, all top-down efforts have failed: try programming swarming behavior, ant foraging, pedestrian/traffic dynamics,… not using simple local interaction rules but with a centralized, hierarchical setup!)

- Understanding the complex system as a network of interactions (graph theory), where the complexity (or structure) of the individual nodes can be ignored

- Challenge: how does the macro behavior emerge from the interaction of the system elements on the micro level?

Laws of Nature Revisited

- Yes: scaling (or power) laws

- Complex, collective phenomena give rise to power laws […] independent of the microscopic details of the phenomenon. These power laws emerge from collective action and transcend individual specificities. As such, they are unforgeable signatures of a collective mechanism; J.P. Bouchaud in “Power-laws in Economy and Finance: Some Ideas from Physics“, 2001

Scaling Laws

Scaling-law relations characterize an immense number of natural patterns (from physics, biology, earth and planetary sciences, economics and finance, computer science and demography to the social sciences) prominently in the form of

- scaling-law distributions

- scale-free networks

- cumulative relations of stochastic processes

A scaling law, or power law, is a simple polynomial functional relationship

f(x) = a x^k <=> Y = (X/C)^E

Scaling laws

- lack a preferred scale, reflecting their (self-similar) fractal nature

- are usually valid across an enormous dynamic range (sometimes many orders of magnitude)
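
A power law is a straight line in a log-log plot, and its slope is the exponent k. The sketch below recovers a and k from data by an ordinary least-squares fit on the logarithms; the data is synthetic (a = 2, k = -1.5, invented for the example), and with real, noisy data more careful estimators are usually preferable.

```python
import math


def fit_power_law(xs, ys):
    """Least-squares line through (log x, log y); returns (prefactor a, exponent k)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mx, my = sum(lx) / n, sum(ly) / n
    k = sum((u - mx) * (v - my) for u, v in zip(lx, ly)) / sum((u - mx) ** 2 for u in lx)
    a = math.exp(my - k * mx)
    return a, k


xs = [10.0 ** e for e in range(-3, 4)]   # spans several orders of magnitude
ys = [2.0 * x ** -1.5 for x in xs]       # synthetic data with a = 2, k = -1.5
a, k = fit_power_law(xs, ys)
print(f"a = {a:.3f}, k = {k:.3f}")

# No preferred scale: doubling x changes f by the same factor 2**k everywhere.
ratios = [(2.0 * (2 * x) ** -1.5) / (2.0 * x ** -1.5) for x in xs]
```

In the second notation above, Y = (X/C)^E, the same fit gives E = k and C = a^(-1/k).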

See also these posts: scaling laws, benford’s law.

Scaling Laws In FX

- Event counts related to price thresholds

- Price moves related to time thresholds

- Price moves related to price thresholds

- Waiting times related to price thresholds

Scaling Laws In Biology

So-called allometric laws describe the relationship between two attributes of living organisms as scaling laws:

- The metabolic rate B of a species scales with its mass M as a power law: B ~ M^(3/4)

- Heartbeat (or breathing) rate T of a species scales with its mass as T ~ M^(-1/4)

- Lifespan L of a species scales with its mass as L ~ M^(1/4)

- Invariants: all species have the same number of heart beats in their lifespan (roughly one billion)
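
The invariant follows directly from the two preceding scaling laws, since the number of heartbeats in a lifetime is the heartbeat rate times the lifespan (prefactors omitted):

```latex
N_{\text{beats}} \;\propto\; T \cdot L \;\propto\; M^{-1/4} \cdot M^{1/4} = M^{0}
```

The mass dependence cancels, so a mouse and an elephant end up with roughly the same total.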

(Fig. G. West)

G. West et al. propose an explanation of the 1/4 scaling exponent, which follows from underlying principles embedded in the dynamical and geometrical structure of space-filling, fractal-like, hierarchical branching networks, presumed optimized by natural selection: organisms effectively function in four spatial dimensions even though they physically exist in three.

Conclusion

- The natural world possesses structure-forming and self-organizing mechanisms leading to consciousness capable of devising formal thought systems which mirror the workings of the natural world

- There are two regimes in the natural world: basic fundamental processes and complex systems comprised of interacting agents

- There are two paradigms: analytical vs. algorithmic (computational)

- There are ‘miracles’ at work:

- the existence of a universe following laws leading to stable emergent features

- the capability of the human mind to devise formal thought systems

- the overlap of mathematics and the workings of nature

- the fact that complexity emerges from simple rules

- There are basic laws of nature to be found in complex systems, e.g., scaling laws

animal intelligence

This is the larger lesson of animal cognition research: It humbles us.

We are not alone in our ability to invent or plan or to contemplate ourselves—or even to plot and lie.

Many scientists believed animals were incapable of any thought. They were simply machines, robots programmed to react to stimuli but lacking the ability to think or feel.

We’re glimpsing intelligence throughout the animal kingdom.

Copyright Vincent J. Musi, National Geographic

A dog with a vocabulary of 340 words. A parrot that answers “shape” if asked what is different, and “color” if asked what is the same, while being shown two items of different shape and same color. An octopus with “distinct personality” that amuses itself by shooting water at plastic-bottle targets (the first reported invertebrate play behavior). Lemurs with calculatory abilities. Sheep able to recognize faces (of other sheep and humans) long term and that can discern moods. Crows able to make and use tools (in tests, even out of materials never seen before). Human-dolphin communication via an invented sign language (with simple grammar). Dolphins’ ability to correctly interpret on the first occasion instructions given by a person displayed on a TV screen.

This may only be the tip of the iceberg…

Read the article Animal Minds in National Geographic’s March 2008 edition.

Ever think about vegetarianism?

output…

- Lecture slides prepared for the University of Essex working group: Stylized Facts in FX

- Talks given at the annual meeting of the German Physical Society, Berlin, Germany:

- The Backbone of Control, Working Group Physics of Socio-Economic Systems (AKSOE): Social, Information- and Production Networks, 27th of February 2008,

- Global Ownership: Unveiling the Structures of Real-World Complex Networks, Section Dynamics and Statistical Physics (DY): Statistical Physics of Complex Networks, 25th of February 2008,

- Talk given with Adrian Plattner at a monthly meeting of the Lions Club in Basel, Switzerland, 15th of April 2008:

- Introducing the Swiss aid organization noon.ch: PDF of talk

quotes

- 1932: “The rest is chemistry.”; C. D. Anderson; echoing the success of a reductionist approach to understanding the workings of nature after having discovered the positron.

- 1972: “At each stage [of complexity] entirely new laws, concepts, and generalizations are necessary […]. Psychology is not applied biology, nor is biology applied chemistry.”; P. W. Anderson; pointing out that the knowledge about the constituents of a system doesn’t reveal any insights into how the system will behave as a whole, e.g., life, consciousness, …; quote from a Science publication called “More is Different” (Vol. 177, No. 4047).

- 1980: “…I want to discuss the possibility that the goal of theoretical physics [finding a complete, consistent and unified theory of physical interactions describing all physical observations] might be achieved in the not too distant future say, by the end of the century.”; S. Hawking; at the time, 11-dimensional supergravity looked like an exciting candidate, only to be succeeded by superstring and M-theory, and rivaled by loop quantum gravity - to date there is no empirical evidence validating or falsifying these mathematical formalisms; quote from his inaugural lecture as the Lucasian Professor of mathematics at Cambridge.

- 2000: “I think the next century will be the century of complexity.”; S. Hawking; after the last century was arguably the century of the quantum; quote from a newspaper interview.

complex networks

The study of complex networks was sparked at the end of the 90s with two seminal papers, describing their universal:

- small-worlds property [1],

- and scale-free nature [2] (see also this older post: scaling laws).

Today, networks are ubiquitous: phenomena in the physical world (e.g., computer networks, transportation networks, power grids, spontaneous synchronization of systems of lasers), biological systems (e.g., neural networks, epidemiology, food webs, gene regulation), and social realms (e.g., trade networks, diffusion of innovation, trust networks, research collaborations, social affiliation) are best understood if characterized as networks.

The explosion of this field of research was and is coupled with the increasing availability of

- huge amounts of data, pouring in from neurobiology, genomics, ecology, finance and the World Wide Web, …,

- computing power and storage facilities.

Paradigm

The new paradigm states that a complex system is best understood if it is mapped to a network. I.e., the links represent some kind of interaction and the nodes are stripped of any intrinsic quality. So, as an example, you can forget about the complexity of the individual bird if you model the flock’s swarming behavior. (See these older posts: complex, fundamental, swarm theory, in a nutshell.)

Weights

Only in the last few years has the attention shifted from this topological level of analysis (either links are present or not) to incorporate weights of links, giving their strength relative to each other. Although harder to tackle, these networks are closer to the real-world systems they model.

Nodes

However, there is still one step missing: the vertices of the network can also be assigned a value, which acts as a proxy for some real-world property that is coded into the network structure.
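The three levels of description (topology only, weighted links, valued vertices) can be sketched in a few lines of Python. The toy network, the names `edges`, `node_value` and the three helper functions are all hypothetical and only meant as an illustration, not as the method used for the plots in this post:

```python
# Hypothetical toy network: a directed link (u, v) with weight w could mean
# "u holds a fraction w of v"; node_value is a made-up size for each vertex.
edges = {("A", "B"): 0.6, ("A", "C"): 0.1, ("B", "C"): 0.3}
node_value = {"A": 10.0, "B": 200.0, "C": 50.0}


def out_degree(node):
    """Topological level: count the outgoing links, ignoring weights."""
    return sum(1 for (src, _dst) in edges if src == node)


def out_strength(node):
    """Weighted level: add up the weights of the outgoing links."""
    return sum(w for (src, _dst), w in edges.items() if src == node)


def weighted_value(node):
    """Vertex-value level: weight each neighbor's value by the link weight."""
    return sum(w * node_value[dst] for (src, dst), w in edges.items() if src == node)


if __name__ == "__main__":
    for n in sorted(node_value):
        print(n, out_degree(n), out_strength(n), weighted_value(n))
```

In this toy example the three measures can rank the same vertices differently, which is the point of going beyond the binary picture.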

The two plots above illustrate the difference if the same network is visualized [3] using weights and values assigned to the vertices (left) or simply plotted as a binary (topological) network (right)…

References

[1] Strogatz S. H. and Watts D. J., 1998, Collective Dynamics of ‘Small-World’ Networks, Nature, 393, 440–442.

[2] Albert R. and Barabasi A.-L., 1999, Emergence of Scaling in Random Networks, www.arXiv.org/abs/cond-mat/9910332.

[3] Cuttlefish Adaptive NetWorkbench and Layout Algorithm: sourceforge.net/projects/cuttlefish/

cool links…

think statistics are boring, irrelevant and hard to understand? well, think again.

two examples of visually displaying important information in an amazingly cool way:

snapshots

territory size shows the proportion of all people living on less than or equal to US$1 in purchasing power parity a day.

worldmapper.org displays a large collection of world maps, where territories are re-sized on each map according to the subject of interest. sometimes an image says more than a thousand words…

information:

- about worldmapper.org

- the UC Atlas of Global Inequality

- the algorithm for resizing areas comes from the complex networks community: Diffusion-based method for producing density equalizing maps

M. T. Gastner, M. E. J. Newman - Newman’s homepage

evolution

want to see the global evolution of life expectancy vs. income per capita from 1975 to 2003? and additionally display the co2 emission per capita? choose indicators from areas as diverse as internet users per 1′000 people to contraceptive use amongst adult women and watch the animation.

gapminder is a fantastic tool that really makes you think…

information:

- the gapminder.org homepage

- h. rosling is the director of the gapminder foundation

- watch him give two inspiring, unusual and tantalizing talks using the gapminder tool: debunking myths about the third world; 2006 and the seemingly impossible is possible; 2007

work in progress…

Some of the stuff I do all week…

Complex Networks

Visualizing a shareholder network:

The underlying network visualization framework is JUNG, with the Cuttlefish adaptive networkbench and layout algorithm (coming soon). The GUI uses Swing.

Stochastic Time Series

Scaling laws in financial time series:

A Java framework allowing the computation and visualization of statistical properties. The GUI is programmed using SWT.

tags: complex networks, visualization, time series, finance, stochastic, scaling laws, computers, java, gui

plugin of the month

The Firefox add-on Gspace allows you to use Gmail as a file server:

This extension allows you to use your Gmail Space (4.1 GB and growing) for file storage. It acts as an online drive, so you can upload files from your hard drive and access them from every Internet capable system. The interface will make your Gmail account look like a FTP host.

tech dependence…

Because technological advancement is mostly quite gradual, one hardly notices it creeping into one’s life. Only if these high-tech commodities were suddenly removed would one realize how dependent one has become.

A random list of ‘nonphysical’ things I wouldn’t want to live without anymore:

Internet

- wikipedia.org: everything you ever wanted to know — and much more

- google.com (e.g., news, scholar, maps, webmaster tools, …): basically the internet;-)

- Web 2.0 communities (e.g., youtube.com, facebook.com, myspace.com, linkedin.com, twitter.com, del.icio.us, …): your virtual social network

- dict.leo.org: towards the babel fish

- amazon.com: recommendations from the fat tail of the probability distribution

Tools

- Web browsers (e.g., Firefox): your window to the world

- Version control systems (e.g., Subversion): get organized

- CMS (e.g., TYPO3): disentangle content from design on your web page and more

- LaTeX typesetting software (btw, this is not a fetish;-): the only sensible and aesthetic way to write scientific documents

- Wikis: the wonderful world of unstructured collaboration

- Blogs: get it out there

- Java programming language: truly platform independent and with nice GUI toolkits (SWT, Swing, GWT); never want to go back to C++ (and don’t even mention C# or .net)

- Eclipse IDE: how much fun can you have while programming?

- MySQL: your very own relational database (the next level: db4o)

- PHP: ok, Ruby is perhaps cooler, but PHP is so easy to work with (e.g., integrating MySQL and web stuff)

- Dynamic DNS (e.g., dyndns.com): let your home computer be a node of the internet

- Web server (e.g., Apache 2): open the gateway

- CSS: ok, if we have to go with HTML, this helps a lot

- VoIP (e.g., Skype): use your bandwidth

- P2P (e.g., BitTorrent): pool your network

- Video and audio compression (e.g., MPEG, MP3, AAC, …): information theory at its best

- Scientific computing (R, Octave, gnuplot, …): let your computer do the work

- Open source licenses (Creative Commons, Apache, GNU GPL, …): the philosophy!

- Object-oriented programming paradigm: think design patterns

- Rich Text editors: online WYSIWYG editing, no messing around with HTML tags

- SSH network protocol: secure and easy networking

- Linux Shell-Programming (“grep”, “sed”, “awk”, “xargs”, pipes, …): old school Unix from the 70s

- E-mail (e.g., IMAP): oops, nearly forgot that one (which reminds me of something I really, really could do without: spam)

- Graylisting: reduce spam

GNU/Linux

- Debian (e.g., Kubuntu): the basis for it all

- apt-get package management system: a universe of software at your fingertips

- Compiz Fusion window manager: just to be cool…

It truly makes one wonder, how all this cool stuff can come for free!!!

tags: internet, web technologies, computers, open source, linux, web 2.0, social networks, programming

climate change 2007

Confused about the climate? Not sure what’s happening? Exaggerated fears or impending cataclysm?

A good place to start is a publication by Swiss Re. It is done in a straightforward, down-to-earth, no-bullshit and sane manner. The source to the whole document is given at the bottom.

Executive Summary

The Earth is getting warmer, and it is a widely held view in the scientific community that much of the recent warming is due to human activity. As the Earth warms, the net effect of unabated climate change will ultimately lower incomes and reduce public welfare. Because carbon dioxide (CO₂) emissions build up slowly, mitigation costs rise as time passes and the level of CO₂ in the atmosphere increases. As these costs rise, so too do the benefits of reducing CO₂ emissions, eventually yielding net positive returns. Given how CO₂ builds up and remains in the atmosphere, early mitigation efforts are highly likely to put the global economy on a path to achieving net positive benefits sooner rather than later. Hence, the time to act to reduce these emissions is now.

The climate is what economists call a “public good”: its benefits are available to everyone and one person’s enjoyment and use of it does not affect another’s. Population growth, increased economic activity and the burning of fossil fuels now pose a threat to the climate. The environment is a free resource, vulnerable to overuse, and human activity is now causing it to change. However, no single entity is responsible for it or owns it. This is referred to as the “tragedy of the commons”: everyone uses it free of charge and eventually depletes or damages it. This is why government intervention is necessary to protect our climate.

Climate is global: emissions in one part of the world have global repercussions. This makes an international government response necessary. Clearly, this will not be easy. The Kyoto Protocol for reducing CO₂ emissions has had some success, but was not considered sufficiently fair to be signed by the United States, the country with the highest volume of CO₂ emissions. Other voluntary agreements, such as the Asia-Pacific Partnership on Clean Development and Climate – which was signed by the US – are encouraging, but not binding. Thus, it is essential that governments implement national and international mandatory policies to effectively reduce carbon emissions in order to ensure the well-being of future generations.

The pace, extent and effects of climate change are not known with certainty. In fact, uncertainty complicates much of the discussion about climate change. Not only is the pace of future economic growth uncertain, but also the carbon dioxide and equivalent (CO₂e) emissions associated with economic growth. Furthermore, the global warming caused by a given quantity of CO₂e emissions is also uncertain, as are the costs and impact of temperature increases.

Though uncertainty is a key feature of climate change and its impact on the global economy, this cannot be an excuse for inaction. The distribution and probability of the future outcomes of climate change are heavily weighted towards large losses in global welfare. The likelihood of positive future outcomes is minor and heavily dependent upon an assumed maximum climate change of 2° Celsius above the pre-industrial average. The probability that a “business as usual” scenario – one with no new emission-mitigation policies – will contain global warming at 2° Celsius is generally considered as negligible. Hence, the “precautionary principle” – erring on the safe side in the face of uncertainty – dictates an immediate and vigorous global mitigation strategy for reducing CO₂e emissions.

There are two major types of mitigation strategies for reducing greenhouse gas emissions: a cap-and-trade system and a tax system. The cap-and-trade system establishes a quantity target, or cap, on emissions and allows emission allocations to be traded between companies, industries and countries. A tax on, for example, carbon emissions could also be imposed, forcing companies to internalize the cost of their emissions to the global climate and economy. Over time, quantity targets and carbon taxes would need to become increasingly restrictive as targets fall and taxes rise. Though both systems have their own merits, the cap-and-trade policy has an edge over the carbon tax, given the uncertainty about the costs and benefits of reducing emissions. First, cap-and-trade policies rely on market mechanisms – fluctuating prices for traded emissions – to induce appropriate mitigating strategies, and have proved effective at reducing other types of noxious gases. Second, caps have an economic advantage over taxes when a given level of emissions is required. There is substantial evidence that emissions need to be capped to restrict global warming to 2 °C above preindustrial levels or a little more than 1 °C compared to today. Given that the stabilization of emissions at current levels will most likely result in another degree rise in temperature and that current economic growth is increasing emissions, the precautionary principle supports a cap-and-trade policy. Finally, cap-and-trade policies are more politically feasible and palatable than carbon taxes. They are more widely used and understood and they do not require a tax increase. They can be implemented with as much or as little revenue-generating capacity as desired. They also offer business and consumers a great deal of choice and flexibility. A cap-and-trade policy should be easier to adopt in a wide variety of political environments and countries.

Whichever system – cap-and-trade or carbon tax – is adopted, there are distributional issues that must be addressed. Under a quantity target, allocation permits have value and can be granted to businesses or auctioned. A carbon tax would raise revenues that could be recycled, for example, into research on energy-efficient technologies. Or the revenues could be used to offset inefficient taxes or to reduce the distributional aspects of the carbon tax.

Source: “The economic justification for imposing restraints on carbon emissions”, Swiss Re, Insights, 2007; PDF

scaling laws

Scaling-law relations characterize an immense number of natural processes, prominently in the form of

- scaling-law distributions,

- scale-free networks,

- cumulative relations of stochastic processes.

A scaling law, or power law, is a simple polynomial functional relationship, i.e., f(x) depends on a power of x. Two properties of such laws can easily be shown:

- a logarithmic mapping yields a linear relationship,

- scaling the function’s argument x preserves the shape of the function f(x), called scale invariance.

See (Sornette, 2006).
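Both properties follow in one line each. As a sketch, write the law as f(x) = a x^k with constants a and k, and let c > 0 be an arbitrary rescaling factor (this notation is assumed here for illustration):

```latex
f(x) = a\,x^{k}
\;\Longrightarrow\;
\log f(x) = \log a + k \log x ,
\qquad
f(c\,x) = a\,(c\,x)^{k} = c^{k}\, f(x) .
```

The first relation is a straight line with slope k in a log-log plot; the second says that rescaling x only multiplies f by the constant c^k, so the shape of the function is unchanged.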

Scaling-Law Distributions

Scaling-law distributions have been observed in an extraordinarily wide range of natural phenomena: from physics, biology, earth and planetary sciences, economics and finance, computer science and demography to the social sciences; see (Newman, 2004). It is truly amazing that such diverse topics as

- the size of earthquakes, moon craters, solar flares, computer files, sand particles, wars and price moves in financial markets,

- the number of scientific papers written, citations received by publications, hits on webpages and species in biological taxa,

- the sales of music, books and other commodities,

- the population of cities,

- the income of people,

- the frequency of words used in human languages and of occurrences of personal names,

- the areas burnt in forest fires,

are all described by scaling-law distributions. First used to describe the observed income distribution of households by the economist Pareto in 1897, the recent advancements in the study of complex systems have helped uncover some of the possible mechanisms behind this universal law. However, there is as of yet no real understanding of the physical processes driving these systems.

Processes following normal distributions have a characteristic scale given by the mean of the distribution. In contrast, scaling-law distributions lack such a preferred scale. Measurements of scaling-law processes yield values distributed across an enormous dynamic range (sometimes many orders of magnitude), and for any section one looks at, the proportion of small to large events is the same. Historically, the observation of scale-free or self-similar behavior in the changes of cotton prices was the starting point for Mandelbrot’s research leading to the discovery of fractal geometry; see (Mandelbrot, 1963).

It should be noted that although scaling laws imply that small occurrences are extremely common, whereas large instances are quite rare, these large events nevertheless occur much more frequently than under a normal (or Gaussian) probability distribution. For normal distributions, events that deviate from the mean by, e.g., 10 standard deviations (called “10-sigma events”) are practically impossible to observe. For scaling-law distributions, extreme events have a small but very real probability of occurring. This fact is summed up by saying that the distribution has a “fat tail” (in the terminology of probability theory and statistics, distributions with fat tails are said to be leptokurtic or to display positive excess kurtosis), which greatly impacts the risk assessment. So although most earthquakes, price moves in financial markets, intensities of solar flares, … will be very small, the possibility that a catastrophic event will happen cannot be neglected.
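To get a feeling for the numbers, here is a minimal Python sketch comparing the probability of a k-sigma event under a normal distribution with that under a Pareto (pure power-law) distribution. The tail exponent alpha = 3 and the lower cutoff x_min = 1 are arbitrary assumptions chosen only so that the variance is finite; the function names are made up for this example.

```python
import math


def gaussian_upper_tail(k):
    """P(Z > k) for a standard normal variable Z."""
    return 0.5 * math.erfc(k / math.sqrt(2.0))


def pareto_mean_std(alpha, x_min=1.0):
    """Mean and standard deviation of a Pareto distribution (requires alpha > 2)."""
    mean = alpha * x_min / (alpha - 1.0)
    var = x_min ** 2 * alpha / ((alpha - 1.0) ** 2 * (alpha - 2.0))
    return mean, math.sqrt(var)


def pareto_upper_tail(x, alpha, x_min=1.0):
    """P(X > x) for a Pareto variable: a pure power law above x_min."""
    return 1.0 if x <= x_min else (x_min / x) ** alpha


def pareto_tail_at_k_sigma(k, alpha=3.0):
    """Probability of exceeding the mean by k standard deviations (assumed alpha)."""
    mean, std = pareto_mean_std(alpha)
    return pareto_upper_tail(mean + k * std, alpha)


if __name__ == "__main__":
    for k in (3, 5, 10):
        print(k, gaussian_upper_tail(k), pareto_tail_at_k_sigma(k))
```

Under these assumptions the 10-sigma event is many orders of magnitude more likely for the power law than for the Gaussian, which is what the term fat tail refers to.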

Scale-Free Networks

Another modern research field marked by the ubiquitous appearance of scaling-law relations is the study of complex networks. Many different phenomena in the physical (e.g., computer networks, transportation networks, power grids, spontaneous synchronization of systems of lasers), biological (e.g., neural networks, epidemiology, food webs, gene regulation), and social (e.g., trade networks, diffusion of innovation, trust networks, research collaborations, social affiliation) worlds can be understood as network based. In essence, the links and nodes are abstractions describing the system under study via the interactions of the elements comprising it.

In graph theory, the degree of a node (or vertex), k, describes the number of links (or edges) the node has to other nodes. The degree distribution gives the probability distribution of degrees in a network. For scale-free networks, one finds that the probability that a node in the network connects with k other nodes follows a scaling law. Again, this power law is characterized by the existence of highly connected hubs, whereas most nodes have small degrees.

Scale-free networks are

- characterized by high robustness against random failure of nodes, but susceptible to coordinated attacks on the hubs, and

- thought to arise from a dynamical growth process, called preferential attachment, in which new nodes favor linking to existing nodes with high degrees.
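The growth process in the last point can be sketched as follows. This is a simplified, assumed variant of preferential attachment (each new node attaches to m distinct existing nodes, chosen with probability proportional to their degree), not a reproduction of any particular paper's algorithm; the function names and parameter values are hypothetical.

```python
import random
from collections import Counter


def preferential_attachment(n_nodes, m=2, seed=0):
    """Grow an undirected network; returns a list of edges (new_node, old_node)."""
    rng = random.Random(seed)
    # Start from a small fully connected core of m + 1 nodes.
    edges = [(i, j) for i in range(m + 1) for j in range(i)]
    # Every node appears in link_ends once per link it has, so drawing
    # uniformly from this list picks a node with probability ~ its degree.
    link_ends = [v for edge in edges for v in edge]
    for new in range(m + 1, n_nodes):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(link_ends))
        for old in targets:
            edges.append((new, old))
            link_ends.extend((new, old))
    return edges


def degree_counts(edges):
    """Degree k of every node, as a Counter mapping node -> k."""
    deg = Counter()
    for a, b in edges:
        deg[a] += 1
        deg[b] += 1
    return deg


if __name__ == "__main__":
    deg = degree_counts(preferential_attachment(2000))
    histogram = Counter(deg.values())
    for k in sorted(histogram)[:10]:
        print(k, histogram[k])
    print("largest hub degree:", max(deg.values()))
```

Plotting the histogram on log-log axes should show an approximately straight line, with a few hubs whose degree is far above the average.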

It should be noted that another prominent feature of real-world networks, namely the so-called small-world property, is separate from a scale-free degree distribution, although scale-free networks are also small-world networks; (Strogatz and Watts, 1998). For small-world networks, although most nodes are not neighbors of one another, most nodes can be reached from every other by a surprisingly small number of hops or steps.

Most real-world complex networks - such as those listed at the beginning of this section - show both scale-free and small-world characteristics.

Some general references include (Barabasi, 2002), (Albert and Barabasi, 2001), and (Newman, 2003). The emergence of scale-free networks in the preferential attachment model is described in (Albert and Barabasi, 1999). An alternative explanation to preferential attachment, introducing non-topological values (called fitness) to the vertices, is given in (Caldarelli et al., 2002).

Cumulative Scaling-Law Relations

Next to distributions of random variables, scaling laws also appear in collections of random variables, called stochastic processes. Prominent empirical examples are financial time-series, where one finds empirical scaling laws governing the relationship between various observed quantities. See (Guillaume et al., 1997) and (Dacorogna et al., 2001).

References

Albert R. and Barabasi A.-L., 1999, Emergence of Scaling in Random Networks, http://www.arXiv.org/abs/cond-mat/9910332.

Albert R. and Barabasi A.-L., 2001, Statistical Mechanics of Complex Networks, http://www.arXiv.org/abs/cond-mat/0106096.

Barabasi A.-L., 2002, Linked — The New Science of Networks, Perseus Publishing, Cambridge, Massachusetts.

Caldarelli G., Capocci A., Rios P. D. L., and Munoz M. A., 2002, Scale-free Networks without Growth or Preferential Attachment: Good get Richer, http://www.arXiv.org/abs/cond-mat/0207366.

Dacorogna M. M., Gencay R., Müller U. A., Olsen R. B., and Pictet O. V., 2001, An Introduction to High-Frequency Finance, Academic Press, San Diego, CA.

Guillaume D. M., Dacorogna M. M., Dave R. D., Müller U. A., Olsen R. B., and Pictet O. V., 1997, From the Bird’s Eye to the Microscope: A Survey of New Stylized Facts of the Intra-Daily Foreign Exchange Markets, Finance and Stochastics, 1, 95–129.

Mandelbrot B. B., 1963, The variation of certain speculative prices, Journal of Business, 36, 394–419.

Newman M. E. J., 2003, The Structure and Function of Complex Networks, http://www.arXiv.org/abs/cond-mat/0303516.

Newman M. E. J., 2004, Power Laws, Pareto Distributions and Zipf’s Law, http://www.arXiv.org/abs/cond-mat/0412004.

Sornette D., 2006, Critical Phenomena in Natural Sciences, Series in Synergetics. Springer, Berlin, 2nd edition.

Strogatz S. H. and Watts D. J., 1998, Collective Dynamics of ‘Small-World’ Networks, Nature, 393, 440–442.

See also this post: laws of nature.

tags: scaling law, power law, scale-free network, probability distribution, stochastic process, scale invariance

swarm theory

National Geographic’s July 2007 edition: Swarm Theory

A single ant or bee isn’t smart, but their colonies are. The study of swarm intelligence is providing insights that can help humans manage complex systems.

benford’s law

In 1881 a result was published, based on the observation that the first pages of logarithm books, used at that time to perform calculations, were much more worn than the other pages. The conclusion was that computations of numbers that started with 1 were performed more often than others: if d denotes the first digit of a number, the probability of its appearance is equal to log_10(1 + 1/d).

The phenomenon was rediscovered in 1938 by the physicist F. Benford, who confirmed the “law” for a large number of random variables drawn from geographical, biological, physical, demographical, economical and sociological data sets. It even holds for randomly compiled numbers from newspaper articles. Specifically, Benford’s law, or the first-digit law, states that a number has first digit 1 with 30.1% probability, 2 with 17.6%, 3 with 12.5%, 4 with 9.7%, 5 with 7.9%, 6 with 6.7%, 7 with 5.8%, 8 with 5.1% and 9 with 4.6%. In general, the leading digit d ∈ {1, …, b−1} in base b ≥ 2 occurs with probability log_b(d + 1) − log_b(d) = log_b(1 + 1/d).
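The percentages are easy to reproduce. The following Python sketch computes the first-digit probabilities from the formula and, as an illustrative data set chosen here (not one from Benford's study), compares them with the leading digits of the first 1000 powers of 2; the function names are made up for this example.

```python
import math
from collections import Counter


def benford_probability(d, base=10):
    """Probability that the leading digit equals d in the given base."""
    return math.log(1.0 + 1.0 / d, base)


def leading_digit(n):
    """First decimal digit of a positive integer."""
    return int(str(n)[0])


def first_digit_shares(numbers):
    """Observed share of each leading digit 1..9 in a collection of integers."""
    numbers = list(numbers)
    counts = Counter(leading_digit(n) for n in numbers)
    return {d: counts[d] / len(numbers) for d in range(1, 10)}


if __name__ == "__main__":
    observed = first_digit_shares(2 ** k for k in range(1, 1001))
    for d in range(1, 10):
        print(d, round(100 * benford_probability(d), 1), round(100 * observed[d], 1))
```

The two columns printed side by side should agree closely, even though nothing about powers of 2 looks random.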

First explanations of this phenomenon, which appears to suspend the notions of probability, focused on its logarithmic nature, which implies a scale-invariant or power-law distribution. If the first digits have a particular distribution, it must be independent of the measuring system, i.e., conversions from one system to another don’t affect the distribution. (This requirement that physical quantities are independent of a chosen representation is one of the cornerstones of general relativity and called covariance.) So the common sense requirement that the dimensions of arbitrary measurement systems shouldn’t affect the measured physical quantities is summarized in Benford’s law. In addition, the fact that many processes in nature show exponential growth is also captured by the law, which assumes that the logarithms of numbers are uniformly distributed.

So how come one observes random variables following normal and scaling-law distributions? In 1996 the phenomenon was mathematically rigorously proven: if one repeatedly chooses different probability distributions and then randomly chooses a number according to each distribution, the resulting list of numbers will obey Benford’s law. Hence the law reflects the behavior of distributions of distributions.

Benford’s law has been used to detect fraud in insurance, accounting or expenses data, where people forging numbers tend to distribute their digits uniformly.

tags: benford, probability

infinity?

There is an interesting observation or conjecture to be made from the Metaphysics Map in the post what can we know?, concerning the nature of infinity.

The Finite

Many observations reveal a finite nature of reality:

- Energy comes in finite parcels (quantum mechanics)

- The knowledge one can have about quanta is a fixed value (uncertainty)

- Energy is conserved in the universe

- The speed of light has the same constant value for all observers (special relativity)

- The age of the universe is finite

- Information is finite and hence can be coded into a binary language

Newer and more radical theories propose:

- Space comes in finite parcels

- Time comes in finite parcels

- The universe is spatially finite

- The maximum entropy in any given region of space is proportional to the region’s surface area and not its volume (this leads to the holographic principle stating that our three-dimensional universe is a projection of physical processes taking place on a two-dimensional surface surrounding it)

So finiteness appears to be an intrinsic feature of the Outer Reality box of the diagram.

There is in fact a movement in physics subscribing to the finiteness of reality, called Digital Philosophy. Indeed, this finiteness postulate is a prerequisite for an even bolder statement, namely, that the universe is one gigantic computer (a Turing-complete cellular automaton), where reality (thought and existence) is equivalent to computation. As mentioned above, the self-organizing, structure-forming evolution of the universe can be seen to produce ever more complex modes of information processing (e.g., storing data in DNA, thoughts, computations, simulations and perhaps, in the near future, quantum computations).

There is also an approach to quantum mechanics focussing on information, stating that an elementary quantum system carries (is?) one bit of information. This can be seen to lead to the notions of quantisation, uncertainty and entanglement.

The Infinite

It should be noted that zero is infinity in disguise. If one lets the denominator of a fraction go to infinity, the result is zero. Historically, zero was discovered in the 3rd century BC in India and was introduced to the Western world by Arabian scholars in the 10th century AD. As ordinary as zero appears to us today, the great Greek mathematicians didn’t come up with such a concept.

Indeed, infinity is something intimately related to formal thought systems (mathematics). Irrational numbers have an infinite number of digits. There are two measures for infinity: countability and uncountability. The former refers to infinite sequences such as 1, 2, 3, …, whereas for the latter, starting from 1.0 one can’t even reach 1.1 because there are infinitely many numbers in the interval between 1.0 and 1.1. In geometry, points and lines are idealizations of dimension zero and one, respectively.

So it appears as though infinity resides only in the Inner Reality box of the diagram.

The Interface

If it should be true that we live in a finite reality with infinity only residing within the mind as a concept, then there should be some problems if one tries to model this finite reality with an infinity-harboring formalism.

Perhaps this is indeed so. In chaos theory, the sensitivity to initial conditions (butterfly effect) can be viewed as the problem of measuring numbers: the measurement can only have a finite degree of accuracy, whereas the numbers have, in principle, an infinite number of decimal places.
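The effect of finite measurement accuracy can be illustrated with a small Python sketch. The logistic map is chosen here only as a standard textbook example of a chaotic system; it does not appear in the argument above, and the starting value, the size of the "measurement error" and the function names are assumptions made for the illustration. Two starting values that agree to ten decimal places are iterated side by side and their distance is tracked.

```python
def logistic_orbit(x0, r=4.0, steps=50):
    """Iterate the logistic map x -> r * x * (1 - x), starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs


def separation(x0, eps, steps=50):
    """Distance between two orbits whose starting values differ by eps."""
    exact = logistic_orbit(x0, steps=steps)
    measured = logistic_orbit(x0 + eps, steps=steps)
    return [abs(p - q) for p, q in zip(exact, measured)]


if __name__ == "__main__":
    # eps plays the role of a finite measurement accuracy (assumed value)
    gaps = separation(0.2, 1e-10, steps=60)
    for step in range(0, 61, 10):
        print(step, gaps[step])
```

The initial gap is tiny, but it roughly doubles at every step on average, so after a few dozen iterations the "measured" orbit no longer says anything about the "exact" one.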

In quantum gravity (the, as yet, unsuccessful merger of quantum mechanics and gravity) many of the inherent problems of the formalism could be bypassed when a theory was proposed (string theory) that replaced zero-dimensional point particles with one-dimensional extended objects. Later incarnations, called M-theory, allowed for multidimensional objects.

In the above-mentioned information-based view of quantum mechanics, the world appears quantised because the information retrieved by our minds about the world is inevitably quantised.

So the puzzle deepens. Why do we discover the notion of infinity in our minds while all our experiences and observations of nature indicate finiteness?

medical studies

introduction

medical studies often contradict each other. results claiming to have “proven” some causal connection are confronted with results claiming to have “disproven” the link, or vice versa. this dilemma affects even reputable scientists publishing in leading medical journals. the topics are diverse:

- high-voltage power supply lines and leukemia [1],

- salt and high blood pressure [1],

- heart diseases and sport [1],

- stress and breast cancer [1],

- smoking and breast cancer [1],

- praying and higher chances of healing illnesses [1],

- the effectiveness of homeopathic remedies and natural medicine,

- vegetarian diets and health,

- low frequency electromagnetic fields and electromagnetic hypersensitivity [2],

- …

basically, this is understood to happen for three reasons:

- i.) the bias towards publishing positive results,

- ii.) incompetence in applying statistics,

- iii.) simple fraud.

i.)

publish or perish. in order to guarantee funding and secure the academic status quo, results are selected by their chance of being published.

an independent analysis of the original data used in 100 published studies exposed that roughly half of them showed large discrepancies between the original aims stated by the researchers and the reported findings, implying that the researchers simply skimmed the data for publishable material [3].

this proves fatal in combination with ii.) as every statistically significant result can occur (per definition) by chance in an arbitrary distribution of measured data. so if you only look long enough for arbitrary results in your data, you are bound to come up with something [1].
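a minimal python sketch of this effect, under stated assumptions: both "treatment" and "control" groups are drawn from the same standard normal distribution, so by construction there is nothing to find, and a simple z-test with known unit variance stands in for whatever a statistics package would do. the function names, group size and number of outcomes are made up for the illustration.

```python
import math
import random


def two_sided_p_value(group_a, group_b):
    """z-test for a difference in means.

    Assumes equal group sizes and a known variance of 1 in both groups.
    """
    n = len(group_a)
    mean_diff = sum(group_a) / n - sum(group_b) / n
    z = mean_diff / math.sqrt(2 / n)
    return math.erfc(abs(z) / math.sqrt(2))


def count_chance_findings(num_outcomes=200, group_size=30, alpha=0.05, seed=1):
    """Count 'significant' results among outcomes that are pure noise."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(num_outcomes):
        a = [rng.gauss(0, 1) for _ in range(group_size)]
        b = [rng.gauss(0, 1) for _ in range(group_size)]
        if two_sided_p_value(a, b) < alpha:
            hits += 1
    return hits


def prob_at_least_one(num_outcomes, alpha=0.05):
    """Chance of one or more false positives among independent tests."""
    return 1 - (1 - alpha) ** num_outcomes


if __name__ == "__main__":
    print("chance findings in 200 noise outcomes:", count_chance_findings())
    print("p(at least one in 20 tests):", round(prob_at_least_one(20), 3))
```

with a threshold of 0.05, about one in twenty of the noise outcomes is expected to come out "significant", and looking at just 20 unrelated outcomes already gives a roughly 64% chance of at least one such finding.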

ii.)

often, due to budget reasons, the numbers of test persons for clinical trials are simply too small to allow for statistical relevance. ref. [4] showed, among other things, that the smaller the studies conducted in a scientific field, the less likely the research findings are to be true.

statistical significance - often evaluated by some statistics software package - is taken as proof without considering the plausibility of the result. many statistically significant results turn out to be meaningless coincidences after accounting for the plausibility of the finding [1].
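the role of plausibility and of study size can be made concrete with the positive predictive value used in ref. [4] (in its simplest form, without bias): the probability that a statistically significant finding is actually true. the sketch below is only an illustration; the function name and the example numbers for prior odds and power are assumptions, not values taken from the cited studies.

```python
def positive_predictive_value(prior_odds, power, alpha=0.05):
    """Probability that a statistically significant finding is true.

    prior_odds: ratio of true to false hypotheses among those tested,
                i.e. the plausibility of the finding before the study.
    power:      chance of detecting a true effect (small studies have
                low power).
    alpha:      significance threshold, the false positive rate.
    """
    true_positives = power * prior_odds
    false_positives = alpha
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # plausible hypothesis, well-powered study (assumed numbers)
    print(round(positive_predictive_value(1.0, 0.8), 3))
    # implausible hypothesis, small underpowered study (assumed numbers)
    print(round(positive_predictive_value(0.01, 0.2), 3))
```

for a plausible hypothesis tested in a large study, a significant result is very likely true; for an implausible hypothesis tested in a small study, the same "p < 0.05" leaves the finding far more likely false than true.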

one study showed that one third of frequently cited results fail a later verification [1].

another study documented that roughly 20% of the authors publishing in the magazine “nature” didn’t understand the statistical method they were employing [5].

iii.) a.)

two thirds of the clinical biomedical research in the usa is supported by the industry - twice as much as in 1980 [1].

it was shown that in 1000 studies done in 2003, the nature of the funding correlated with the results: 80% of industry financed studies had positive results, whereas only 50% of the independent research reported positive findings.

it could be argued that the industry has a natural propensity to identify effective and lucrative therapies. however, the authors show that many impressive results were only obtained because they were compared with weak alternative drugs or placebos. [6]

iii.) b.)

quoted from wikipedia.org:

“Andrew Wakefield (born 1956 in the United Kingdom) is a Canadian trained surgeon, best known as the lead author of a controversial 1998 research study, published in the Lancet, which reported bowel symptoms in a selected sample of twelve children with autistic spectrum disorders and other disabilities, and alleged a possible connection with MMR vaccination. Citing safety concerns, in a press conference held in conjunction with the release of the report Dr. Wakefield recommended separating the components of the injections by at least a year. The recommendation, along with widespread media coverage of Wakefield’s claims was responsible for a decrease in immunisation rates in the UK. The section of the paper setting out its conclusions, known in the Lancet as the “interpretation” (see the text below), was subsequently retracted by ten of the paper’s thirteen authors.

[…]

In February of 2004, controversy resurfaced when Wakefield was accused of a conflict of interest. The London Sunday Times reported that some of the parents of the 12 children in the Lancet study were recruited via a UK attorney preparing a lawsuit against MMR manufacturers, and that the Royal Free Hospital had received £55,000 from the UK’s Legal Aid Board (now the Legal Services Commission) to pay for the research. Previously, in October 2003, the board had cut off public funding for the litigation against MMR manufacturers. Following an investigation of The Sunday Times allegations by the UK General Medical Council, Wakefield was charged with serious professional misconduct, including dishonesty, due to be heard by a disciplinary board in 2007.

In December of 2006, the Sunday Times further reported that in addition to the money given to the Royal Free Hospital, Wakefield had also been personally paid £400,000 which had not been previously disclosed by the attorneys responsible for the MMR lawsuit.”

wakefield had always only expressed his criticism of the combined triple vaccination, supporting single vaccinations spaced in time. the british tv station channel 4 exposed in 2004 that he had applied for patents for the single vaccines. wakefield dropped his subsequent slander action against the media company only in the beginning of 2007. as mentioned, he now awaits charges for professional misconduct. however, he has left britain and now works for a company in austin, texas. it has been uncovered that other employees of this us company had received payments from the same attorney preparing the original lawsuit. [7]

update: december 2007: slashdot: “YouTube Breeding Harmful Scientific Misinformation”.

epilog

should we be surprised by all of this? next to the innate tendency of human beings to be incompetent and unscrupulous, there is perhaps another level, that makes this whole endeavor special.

the inability of scientists to conclusively and reproducibly uncover findings concerning human beings is maybe better appreciated if one considers the nature of the subject under study. life, after all, is an enigma and the connection linking the mind to matter is elusive at best (i.e., the physical basis of consciousness).

the body’s capability to heal itself, i.e., the placebo effect and the need for double-blind studies, is indeed very bizarre. however, there are studies questioning whether the effect exists at all;-)

taken from

http://j-node.homeip.net/essays/science/medical-studies/ (consult also for the corresponding links for the sources cited below)

—

[1] This article in the magazine issued by the Neue Zürcher Zeitung by Robert Matthews

[2] C. Schierz; Projekt NEMESIS; ETH Zürich; 2000

[3] A. Chan (Center of Statistics in Medicine, Oxford) et al.; Journal of the American Medical Association; 2004

[4] J. Ioannidis; “Why Most Published Research Findings Are False”; University of Ioannina; 2005

[5] R. Matthews, E. García-Berthou and C. Alcaraz as reported in this “Nature” article; 2005

[6] C. Gross (Yale University School of Medicine) et al.; “Scope and Impact of Financial Conflicts of Interest in Biomedical Research”; Journal of the American Medical Association; 2003

[7] H. Kaulen; “Wie ein Impfstoff zu Unrecht in Misskredit gebracht wurde”; Deutsches Ärzteblatt; Jg. 104; Heft 4; 26. Januar 2007

[2] C. Schierz; Projekt NEMESIS; ETH Zürich; 2000

[3] A. Chan (Center of Statistics in Medicine, Oxford) et. al.; Journal of the American Medical Association; 2004

[4] J. Ioannidis; “Why Most Published Research Findings Are False” ; University of Ioannina; 2005

[5] R. Matthews, E. García-Berthou and C. Alcaraz as reported in this “Nature” article; 2005

[6] C. Gross (Yale University School of Medicine) et. al.; “Scope and Impact of Financial Conflicts of Interest in Biomedical Research “; Journal of the American Medical Association; 2003

[7] H. Kaulen; “Wie ein Impfstoff zu Unrecht in Misskredit gebracht wurde”; Deutsches Ärzteblatt; Jg. 104; Heft 4; 26. Januar 2007

in a nutshell

Introduction

- i.) analyzing the nature of the object being considered/observed,

- ii.) developing the formal representation of the object’s features and its dynamics/interactions,

- iii.) devising methods for the empirical validation of the formal representations.

To be precise, level i.) lies more within the realm of philosophy (e.g., epistemology) and metaphysics (i.e., ontology), as notions of origin, existence and reality appear to transcend the objective and rational capabilities of thought. The main problem being:

“Why is there something rather than nothing? For nothingness is simpler and easier than anything.”; [1].

In the history of science the above-mentioned formulation made the understanding of at least three different levels of reality possible:

- a.) the fundamental level of the natural world,

- b.) inherently random phenomena,

- c.) complex systems.

While level a.) deals mainly with the quantum realm and cosmological structures, levels b.) and c.) are comprised mostly of biological, social and economic systems.

Examples

a.) Fundamental

Many natural sciences focus on a.i.) fundamental, isolated objects and interactions, use a.ii.) mathematical models which are a.iii.) verified (falsified) in experiments that check the predictions of the model - with great success:

“The enormous usefulness of mathematics in the natural sciences is something bordering on the mysterious. There is no rational explanation for it.”; [2].

b.) Random

Often the nature of the object b.i.) being analyzed is in principle unknown. Only statistical evaluations of sets of outcomes of single observations/experiments can be used to estimate b.ii.) the underlying model, and b.iii.) test it against more empirical data. This is often the approach taken in the fields of social sciences, medicine, and business.

c.) Complex